Accuracy: The degree of conformity of an indicated value to a recognized accepted standard value.1 Accuracy for instruments is normally stated in terms of error (±0.05% of upper range value [URV], ±1% of span, ±0.5% of reading, ±3/4 degree, etc.).
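Because each basis (URV, span, reading) scales differently, the same percentage can translate into quite different absolute errors. The following is a minimal sketch of that conversion; the transmitter range, span, and reading are hypothetical values chosen only for illustration.

```python
def absolute_error(percent, basis):
    """Convert a percent-of-basis accuracy statement to engineering units."""
    return percent / 100.0 * basis

# Hypothetical transmitter (illustrative values only, not from the article).
lrv = 50.0          # lower range value
urv = 200.0         # upper range value
span = urv - lrv    # calibrated span = 150.0
reading = 120.0     # current indicated value

err_urv = absolute_error(0.05, urv)         # +/-0.05% of URV
err_span = absolute_error(1.0, span)        # +/-1% of span
err_reading = absolute_error(0.5, reading)  # +/-0.5% of reading

print(f"URV basis:     +/-{err_urv:.3f}")
print(f"Span basis:    +/-{err_span:.3f}")
print(f"Reading basis: +/-{err_reading:.3f}")
```

Note that only the percent-of-reading figure shrinks as the measured value falls; the URV and span figures stay fixed across the range.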

William (Bill) Mostia, Jr., P.E., is an independent process control and engineering consultant. He can be reached at [email protected]

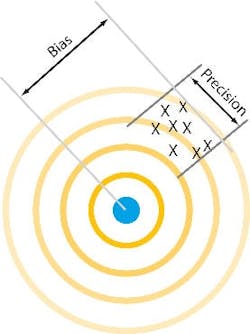

Bias may be known or unknown. An example of a known bias is the deviation of a calibration standard from a National Institute of Standards and Technology (NIST)/National Bureau of Standards (NBS) reference. Large known biases are normally calibrated out. Small known biases are normally compensated out.

Precision errors are considered statistically random. They can be stated as the product of the measurement's standard deviation and the two-sided 95% Student's t value for the sample size, which gives an error specification at the 95% confidence level.
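As a rough illustration of that product, the sketch below multiplies a sample standard deviation by a two-sided 95% Student's t value. The small lookup table holds standard published t values for 1 to 10 degrees of freedom; the function name and the sample readings are hypothetical.

```python
import statistics

# Two-sided 95% Student's t values, keyed by degrees of freedom (n - 1).
T95 = {
    1: 12.706, 2: 4.303, 3: 3.182, 4: 2.776, 5: 2.571,
    6: 2.447, 7: 2.365, 8: 2.306, 9: 2.262, 10: 2.228,
}

def precision_error(readings):
    """Return t95 * s for a set of repeated readings of the same input."""
    dof = len(readings) - 1
    if dof not in T95:
        raise ValueError("this sketch only tabulates 2 to 11 readings")
    s = statistics.stdev(readings)  # sample standard deviation
    return T95[dof] * s

# Hypothetical repeated readings of a 100.0-unit reference input.
readings = [100.1, 99.9, 100.0, 100.2, 99.8]
print(f"precision error: +/-{precision_error(readings):.3f}")
```

With few readings the t value is large, so the stated precision error widens considerably compared with the bare standard deviation.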

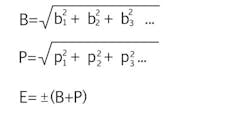

Also random are errors specified for transmitters, calculation devices, constant uncertainty, recorders, input/output devices, etc. Bias and precision errors can be individually combined using the root sum square (RSS) method, then the bias and precision errors can be combined:

where:

E = total probable error,

B = total probable bias errors,

b = bias errors,

P = total probable precision error, and

p = precision errors.
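The combination described above can be sketched as follows. The individual RSS steps follow the text directly; the final step is assumed here to be an RSS of B and P as well, i.e. E = sqrt(B^2 + P^2), which is one common convention and should be checked against the method actually in use. The error values are hypothetical.

```python
import math

def rss(errors):
    """Root sum square of a list of individual error terms."""
    return math.sqrt(sum(e * e for e in errors))

def total_probable_error(bias_errors, precision_errors):
    """Combine individual bias (b) and precision (p) errors.

    Assumption: B and P are themselves combined by RSS.
    """
    B = rss(bias_errors)        # total probable bias error
    P = rss(precision_errors)   # total probable precision error
    E = math.hypot(B, P)        # total probable error, sqrt(B^2 + P^2)
    return B, P, E

# Hypothetical error terms, all in percent of span.
B, P, E = total_probable_error([0.3, 0.4], [0.5, 1.2])
print(f"B = {B:.3f}, P = {P:.3f}, E = {E:.3f}")
```

RSS combination assumes the individual terms are independent; errors that are known to be correlated are not well represented by this sketch.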

Absolute accuracy: How close a measurement is to the NIST/NBS standard (the "golden ruler"). The accuracy traceability pyramid is shown in Figure 2.

Deadband: The range through which an input signal may be varied upon reversal of direction without initiating an observable change in the output signal.1

Drift or stability error: The undesired change in output over a specified period of time for a constant input under specified reference operating conditions.1

Dynamic error: The error resulting from the difference between the reading of an instrument and the actual value during a change in the actual value. Instrument damping contributes to this error, as do measurement and transport deadtime, and the significance depends on the process time constant. Dynamic error must be considered when designing safety systems: if your instrumentation system cannot measure a developing hazardous condition in time for the safety system to react, the process may not get to a safe state in a timely manner.
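For a rough feel of the magnitude, consider a measurement modeled as a first-order lag plus pure deadtime following a steady ramp. Once the initial transient has died out, the reading trails the true value by the ramp rate times the sum of the time constant and the deadtime. The sketch below assumes that simplified model; the function name and numbers are illustrative and not taken from the article.

```python
def ramp_dynamic_error(ramp_rate, time_constant, deadtime=0.0):
    """Steady-state lag error for a first-order-plus-deadtime measurement.

    ramp_rate     -- rate of change of the true value (units per second)
    time_constant -- measurement damping / lag time constant (seconds)
    deadtime      -- pure measurement or transport delay (seconds)
    """
    return ramp_rate * (time_constant + deadtime)

# Hypothetical case: temperature rising 2 degC/s, 3 s sensor lag, 1 s deadtime.
error = ramp_dynamic_error(ramp_rate=2.0, time_constant=3.0, deadtime=1.0)
print(f"reading trails the process by about {error:.1f} degC")
```

In a safety application, this lag translates directly into how far past the trip point the process can be before the instrument reports it.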

EMI/RFI errors: Errors due to electromagnetic or radio frequency interference.

Filter error: Error caused by the improper application of a filter on the signal. This error can also be caused by improper settings in exception reporting and compression algorithms.

Hysteresis: The dependence of the output, for a given excursion of the input, upon the history of prior excursions and the direction of the current traverse.1

Influence errors: Errors due to operating conditions deviating from base or reference conditions. Typically specified as effects on the zero and span, these errors reflect the instrument's capacity to compensate for variations in operating conditions. Influence errors are often significant contributors to the overall error of an instrument.

Inherent errors: Errors inherent in an instrument at reference conditions. These are due to the inherent mechanical and electrical design and manufacturing of the instrument.

Linearity: The deviation of the calibration curve from a straight line. Linearity is normally specified according to how that straight line is positioned relative to the calibration points: independent (best straight-line fit), zero-based (straight line through the zero calibration point, positioned to minimize the maximum deviation), or terminal-based (straight line between the zero and the 100% calibration points). Devices are normally calibrated at zero and at their upper range value (URV), which are the terminal points.
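Terminal-based linearity is the simplest of the three to compute from calibration data, since the reference line is fixed by the two end points. A minimal sketch, using hypothetical five-point calibration data in percent of span:

```python
def terminal_based_linearity(inputs, outputs):
    """Largest deviation of the calibration curve from the straight line
    drawn between the first (zero) and last (100%) calibration points."""
    x0, x1 = inputs[0], inputs[-1]
    y0, y1 = outputs[0], outputs[-1]
    slope = (y1 - y0) / (x1 - x0)
    deviations = [y - (y0 + slope * (x - x0)) for x, y in zip(inputs, outputs)]
    return max(abs(d) for d in deviations)

# Hypothetical calibration points (percent of span).
inputs = [0.0, 25.0, 50.0, 75.0, 100.0]
outputs = [0.0, 25.2, 50.3, 75.1, 100.0]
print(f"terminal-based linearity: +/-{terminal_based_linearity(inputs, outputs):.2f}% of span")
```

An independent (best-fit) line would generally give a smaller figure for the same data, which is worth keeping in mind when comparing vendor specifications.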

Overrange influence error: Error resulting from the overranging of the instrument after installation. This is normally a zero shift error.

Power supply effect: The effect on accuracy due to a shift in power supply voltage. This error could also apply to the air supply pressure for a pneumatic instrument.

Reference accuracy: Accuracy, typically in percent of calibrated span, specified by the vendor at a reference temperature, barometric pressure, static pressure, etc. This accuracy specification may include the combined effects of linearity, hysteresis, and repeatability. Reference accuracy also may be stated in terms of mode of operation: analog, digital, or hybrid.

Resolution: The smallest interval that can be distinguished between two measurements. In digital systems, this is determined by the number of bits used to represent the analog signal (e.g., the analog span divided by the count resolution).1
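As a simple illustration, the sketch below divides an analog span by the number of counts of an n-bit converter. It assumes 2^n counts across the span; some definitions use 2^n - 1 steps instead, and real converters may reserve counts for over- and under-range. The 4-20 mA, 12-bit example is hypothetical.

```python
def digital_resolution(span, bits):
    """Smallest distinguishable step, assuming 2**bits counts over the span."""
    return span / (2 ** bits)

# Hypothetical 4-20 mA input (16 mA span) digitized with 12 bits.
step = digital_resolution(span=16.0, bits=12)
print(f"resolution: {step:.5f} mA per count")
```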

Repeatability: The degree of agreement of a number of consecutive measurements of the output for the same value of input under the same operating conditions, approaching from the same direction.1 Note that this specification is approaching from one direction and does not include any effects of hysteresis or deadband. The repeatability error specification is the largest error determined from both upward and downward traverses. The field or common usage of this term is closer to the term "reproducibility" than to the ISA definition.
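One way to read that definition numerically: for each direction of approach, find the largest spread among repeated readings at the same input point, then report the larger of the upscale and downscale figures. The sketch below assumes each run is a list of outputs taken at the same sequence of input points; the data layout, function name, and readings are hypothetical.

```python
def repeatability(upscale_runs, downscale_runs):
    """Largest same-direction spread across repeated calibration traverses.

    Each argument is a list of runs; each run lists the output observed at
    the same sequence of input points, approached from one direction.
    """
    def worst_spread(runs):
        # zip(*runs) groups the readings taken at each input point.
        return max(max(point) - min(point) for point in zip(*runs))

    return max(worst_spread(upscale_runs), worst_spread(downscale_runs))

# Hypothetical outputs (percent of span) at 0%, 50%, and 100% input.
upscale = [[0.0, 50.1, 100.0], [0.1, 50.0, 100.1], [0.0, 50.2, 100.0]]
downscale = [[0.2, 50.4, 100.0], [0.2, 50.3, 100.1], [0.3, 50.4, 100.0]]
print(f"repeatability: {repeatability(upscale, downscale):.2f}% of span")
```

Upscale and downscale readings are never compared with each other here, which is why hysteresis and deadband do not enter the result.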

Reproducibility: The degree of agreement of repeated measurements of the output for the same value of input made under the same operating conditions over a period of time, approaching from both directions. Reproducibility includes the effects of hysteresis, deadband, drift, and repeatability.1 This term is an excellent specification, but for some reason vendors typically choose not to use it for modern instruments.

Sampling error: The error caused by sampling a signal with too low a sampling frequency. In general, the sampling frequency should be at least twice the highest frequency in the signal being sampled.
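When that rule is violated, a signal component above half the sampling frequency appears at a false, lower frequency (aliasing). The sketch below applies the standard frequency-folding calculation to show where a component would appear; the function name and example frequencies are illustrative.

```python
def apparent_frequency(signal_hz, sample_hz):
    """Frequency at which a sinusoid appears after sampling at sample_hz."""
    # Fold the signal frequency about the nearest multiple of the sample rate.
    return abs(signal_hz - sample_hz * round(signal_hz / sample_hz))

# A 3 Hz component sampled at 10 Hz is below half the sample rate: unchanged.
print(apparent_frequency(3, 10))
# A 7 Hz component sampled at 10 Hz is above half the sample rate: it aliases.
print(apparent_frequency(7, 10))
```

In practice, an anti-aliasing filter ahead of the sampler is the usual way to keep components above half the sampling frequency out of the sampled data.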

Temperature effect error: The percent change in zero and span for an ambient temperature change from reference conditions. This can be a significant contributor to the total error.

Vibration influence error: The error caused by exposing the instrument to vibrations, normally specified per g of acceleration and up to some frequency.

References:

1. "Process Instrumentation Terminology," ISA-S51.1 1993, Instrument Society of America.

2. Measurement Uncertainty Handbook, Dr. R.B. Abernethy et al. & J.W. Thompson, ISA 1980.

3. "Is That Measurement Valid?," Robert F. Hart & Marilyn Hart, Chemical Processing, October 1988.
