Does distributed I/O architecture make sense?

A Control Design reader writes: As a control designer at a large custom machine builder, I regularly define hundreds of I/O points and create electrical drawings for the systems we provide. Most of these points are discrete but some are analog. During this process, I often struggle with whether I should use local rack-based I/O, break it down to remote I/O racks in field control enclosures or use distributed I/O mounted to the machines. In the past, I have usually kept the I/O local, so installation required running large bundles of wires from the main enclosure to the field devices. However, I am considering changing my I/O system architecture to a more distributed one. I'm sure the use of local I/O is fine in some system designs, but what are the deciding factors on the type of I/O system used? Also, some systems are high speed and complex, so speed of any I/O used is very important, which has always been a concern when using a network cable to connect distributed I/O. I'm sure there are other design considerations, but I'm not sure what they are. Please help me understand some best practices when specifying I/O.

Performance, reassembly and cost

I/O decisions (local vs. remote) must be based upon a hierarchy of priorities.

First priority is optimal machine performance. Many complex machines have a relatively small amount of critical I/O from a speed-of-processor-update standpoint. For example, you would not put an encoder on a remote I/O network that cannot be reliably scanned at the fastest update rate needed for deterministic machine operation. But you could very likely use a remote output for a stack light as the timing for that operation is not critical. On bottle filling/capping/labeling equipment that I have seen, there are mixes of local with long runs back to the main rack and remote I/O to satisfy the demands of machine performance.

Second priority is ease of machine reassembly. Large custom machines may need to be manufactured in movable subassemblies. When these assemblies are mated to create the final machine, there may very well be a formidable amount of field reconnection needed. In addition to taking time, this also can create a point of failure. Reducing the amount of field wiring reconnect is good design practice. This is also true if the control enclosures are remotely mounted from the actual machine.

Third is cost. Remote I/O always has a cost associated with just the adapter. The more I/O points you have on an adapter, the lower the cost per point for remote.
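The cost-per-point effect can be sketched in a few lines of Python. This is only an illustration: the function name, the adapter price, the module price and the eight-point module size are hypothetical placeholders, not figures from any vendor.

```python
def cost_per_point(adapter_cost, module_cost, points_per_module, points):
    """Hardware cost per I/O point on a single remote adapter.

    All prices are hypothetical; the fixed adapter cost is spread
    over however many points hang off that adapter.
    """
    if points <= 0:
        raise ValueError("points must be positive")
    modules = -(-points // points_per_module)  # ceiling division
    return (adapter_cost + modules * module_cost) / points


# Assumed prices: 400 for the adapter, 150 per 8-point module.
for points in (8, 16, 32, 64):
    print(points, cost_per_point(400.0, 150.0, 8, points))
```

With these assumed prices, the per-point figure falls steadily as more points share one adapter, which is the trend described above.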

An additional factor that must be considered is the I/O network itself. There are several options, depending on the control system; some are fine for slower applications, while others are very fast and can act just like local (rack) I/O from a timing perspective (first priority). It is best to research remote I/O networks, as it can be a deciding factor in overall I/O layout.

For example, the newest machine we manufacture has a local rack inside the control enclosure that is actually a remote rack, in that it connects to the controller over a network. The processor and cards slide together, creating an assembly that looks local, but the communication to these cards is over the EtherCAT network. Depending on the options ordered, we can also have a small number of remotely mounted cards, also inside the machine-mounted enclosure, that use an adapter with a network cable to attach to the main card assembly. As you can see, the performance of this particular network has made the first priority rather meaningless.

Mike Krummey, electrical engineering manager, Matrix Packaging Machinery, Saukville, Wisconsin

Slow vs. complex

Remote I/O is perfectly fine 98% of the time, as it does save longer wiring runs, which can be costly. As long as you are controlling things that are "slow" on remote I/O blocks—relays, blinky lights—remote I/O is fine.

If there are any signal speed requirements for a faster response to or from the PLC, which is sometimes needed for a safety system feature or possibly the analog signals, then these items should go to the I/O rack where the PLC is located as local I/O.

Keep anything "complex" on local I/O, as well. I wouldn't run I/O to a robot or vision system or anything like that on remote I/O blocks. In general, my personal preference is to run all analog signals to local I/O, as well, as you will be sure to have enough response speed available directly from the PLC for the analog control system. It always depends on the system, of course.

Gray Robbins, senior control system engineer, Toward Zero, Indianapolis and member of Control System Integrators Association (CSIA)

Cabinet- vs. machine-mounted

With available industrial network technologies, it just doesn't make sense to pull copper wires out to devices. These networks are fast enough for any application; they are fast enough for precision, high-speed, synchronized motion control. There are even I/O slices capable of a 1-microsecond response time using onboard intelligence rather than going back to the PLC's CPU.

Perhaps the main consideration is whether to use cabinet-mounted I/O, machine-mounted IP67-rated I/O or a combination. A base machine configuration might see the I/O housed in the cabinet and options or lengthy conveyors equipped with machine-mounted I/O. Note that the network cable can also perform the role of networked (vs. hardwired) safety, safe motion and safe robotics, providing huge performance and diagnostic benefits—both widely adopted in Europe but relatively unknown in North America, aside from the most progressive OEMs.

The same network can also be used to control machine-mounted motor/drives and motor control modules out on the machine. The motor/drives typically offer the ability to connect I/O on the network, and the motor control modules are being used for applications such as automatically adjustable conveyor guide rails for fast, precise changeovers.

There are so many networked I/O functionalities available—from strain gauge to energy monitoring modules—there is really no reason to hardwire I/O even if the I/O count is low.

That said, there is also no reason to run expensive machine-mounted fieldbus I/O module cables out on the machine. A simpler network implementation is to extend the PLC backplane communications out via cable to the remote I/O modules, a solution that's been commercially available for many years.

Final word—your I/O and safety network technology should be open source and recognized by standards bodies; and you should therefore not have to pay license fees or royalties.

John Kowal, director, business development, B&R Industrial Automation

Count the I/O points

In these applications, I usually consider all options in a machine design. If I/O signals are located semi-close to the main control cabinet, I usually continue to parallel-wire them. For the rest of the machine, I intermingle IP20 and IP67 I/O. If the I/O location has fewer than 16 digital I/O points, I consider IP67 direct machine-mount I/O. While the modules are more expensive, they are still cheaper than installing and wiring junction boxes. If the locations have higher I/O counts along with mixed signal types, I go with IP20 in-cabinet I/O. In-cabinet I/O options are usually more flexible and can be customized to match your I/O needs.
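The rule of thumb above can be written as a small decision function. This is a rough sketch, not a selection tool: the function and parameter names are made up for illustration, and what counts as close to the main cabinet is left to the designer. Locations with a low point count but mixed signal types are not addressed in the text, so the sketch decides on point count alone.

```python
def suggest_io_style(near_main_cabinet, digital_points):
    """Suggest an I/O style for one location on the machine.

    near_main_cabinet: designer's judgment (hypothetical flag).
    digital_points: number of digital I/O points at the location.
    """
    if near_main_cabinet:
        return "parallel-wired to main cabinet"
    if digital_points < 16:
        return "IP67 machine-mount I/O"
    return "IP20 in-cabinet I/O"


print(suggest_io_style(True, 40))
print(suggest_io_style(False, 12))
print(suggest_io_style(False, 48))
```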

The last piece to consider is the layout of the industrial network. With this being a new machine design, I’m making the assumption you are going with an industrial Ethernet protocol. Ethernet is great because of its flexibility, but you need to be careful what other devices are attached to the I/O network. While it is true you lose some throughput with industrial Ethernet networking, this time only has a minimal effect on the application, as long as you are not overloading the Ethernet network with noncritical data. The key is to keep your I/O network isolated from the rest of the network on the plant floor.

An easy way to do this is to place a network security appliance between the machine and the rest of the network. These devices keep unwanted data on the plant network from affecting the network bandwidth of the machine.

Jason Haldeman, lead product marketing specialist—I/O & light, I/O and networks, Phoenix Contact

Embed the I/O

Another option for applications with limited space is to embed the I/O directly on a PCB. It is possible to design a circuit board whereby I/O terminals can be connected without the need for individual wiring—the wiring is designed into the circuit board traces. Traditional wiring processes are replaced with error-free pluggable connections. This option is ideal for high-volume, repeated machine designs where incorrect wiring must be eliminated and installation time reduction is a major goal.

Machine-mountable “box” I/O also brings the added benefit of easy access to the I/O module, which makes troubleshooting much simpler. If everything is sent back to the electrical panel, tracing the wires could be difficult, especially if they are not labeled properly. However, some applications may not have easy access to different parts of the machine required for I/O mounting. In that case, a remote I/O box would be a better choice, though this may incur additional wiring and installation cost and must be considered.

The bottom line is to use local and remote I/O solutions where each makes sense. It really boils down to application requirements and making the best use of the technology to improve efficiency and reduce costs.

Sree Potluri, I/O product specialist, Beckhoff Automation

Did you fix it yet?

If the system has several I/O points that can be logically divided into groups, then there are advantages to designing a modular machine consisting of a main control panel and various smaller subpanels. Each subpanel would consist of remote rack-based I/O, where all I/O wiring is close to the field devices. This distributed setup reduces the conduit sizes and helps to simplify the wiring diagram: you will make fewer wiring mistakes, spend less time wiring and can easily make last-minute changes without affecting the rest of the machine. If any issues arise after the machine is deployed, OEMs have the option to quickly replace only the subpanel in question to keep the machine running. You can then troubleshoot the faulty panel off-site without people over your shoulders asking, "Did you fix it yet?" A distributed approach also provides flexibility to add upgrades and new features or accommodate unforeseen changes in the production environment.

For complex systems that require fast response times, choose a deterministic network that maintains network integrity without much tuning or expensive managed switches. For example, CC-Link IE Field is a 1-Gbps industrial Ethernet network that transmits large amounts of I/O data in a repeatable time frame and without data collision, regardless of how much explicit messaging is being transmitted over the network. As a result, you will have reliable and accurate control.

Deana Fu, senior product manager, Mitsubishi Electric Automation

Consider cost, space and installation

To decide which installation concept is the best fit for your custom machines, you need to take several factors into consideration. These include cost, space and installation time/effort.

Local, rack-based I/O may be the best solution for small machines, but it has several disadvantages when used on medium and large machines. Although the hardware costs for local, rack-based I/O are typically lower than a remote I/O solution, hidden costs such as assembly time and troubleshooting make this option the most expensive.

A remote I/O solution reduces cost and space needed inside the main cabinet since it reduces the quantity of both terminals and protection mechanisms. It reduces wiring efforts, and troubleshooting time is decreased, as well, by the level of diagnostic information offered by the remote I/O devices. Additionally, a solution like this allows you to assemble a machine in parts or to split the machine for transportation. Remote I/O racks do require extra space for enclosures. Additional terminals are required for power distribution and grounding shielded cables. This is a centralized solution that can help to reduce the length of peripheral cables when combined with passive distribution boxes.

Distributed I/O solutions require a larger investment in hardware. But, on the other hand, they reduce assembly time and increase the availability of your machines, often by more than 40%. In combination with pre-wired cables, they drastically reduce wiring mistakes. The I/O devices can be mounted near the peripherals, and expansions are much easier to make.

The best network for your machine is really dependent on the application. When making your selection, take the following factors into consideration:

- What is the cycle time required for the application?

- What topology fits the machine better?

- How many devices will be connected?

- What level of diagnostics is required?

These are just a few of the factors you should take into consideration.

Rafael Calamari, product manager—fieldbus, Murrelektronik

5 Factors

Remote I/O systems are designed to not only simplify some of the design challenges faced by machine builders, but also to ease integration and provide increased performance and productivity.

Using distributed or remote I/O gives machine builders the ability to achieve the best of both worlds: local I/O for those points that are close by and an extension of the local bus to the remote locations to decrease the number of cables that have to be run back to the main cabinet.

When choosing a distributed I/O system, keep in mind some of these factors.

1. Bus protocol: This factor not only plays a role in overall speed of the system, but also how much expansion can be done when integrating the machine into a plant network.

In newer distributed I/O systems, the actual speed limitation is the bus protocol. Serial-based protocols such as Profibus/DeviceNet/CANopen are considered much slower, as compared to Ethernet- or IP-based protocols. The distributed I/O systems themselves have a very fast response or scan time. As long as the network cable runs are within the set limits, the local or main controller never sees any delays in response time.
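To give a feel for why the bus protocol sets the speed limit, the sketch below computes only the raw time on the wire for a block of I/O data at each bus's maximum bit rate. It is a deliberately crude estimate: it ignores framing, protocol overhead, arbitration and device scan times, and the 64-byte payload is an arbitrary assumption. The bit rates are the commonly published maximums for each bus.

```python
# Maximum nominal bit rates in bits per second.
BIT_RATES_BPS = {
    "DeviceNet": 500_000,
    "CANopen": 1_000_000,
    "Profibus DP": 12_000_000,
    "Fast Ethernet": 100_000_000,
}


def wire_time_us(payload_bytes, bit_rate_bps):
    """Raw transmission time in microseconds, payload bits only."""
    return payload_bytes * 8 * 1_000_000 / bit_rate_bps


PAYLOAD_BYTES = 64  # hypothetical I/O image size
for name, rate in BIT_RATES_BPS.items():
    print(f"{name}: {wire_time_us(PAYLOAD_BYTES, rate):.2f} us")
```

Real cycle times are longer than these figures on every bus, but the ordering between the serial buses and Ethernet is what the paragraph above describes.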

2. I/O mix: This is where it really does get easier and, in many cases, less expensive to use distributed I/O as opposed to local I/O. If using local I/O, the size of the cabinet has to grow in order to accommodate I/O. As well, some cards are very specific as to whether they are inputs or outputs and typically are not easy to configure for different inputs—not to mention, the cost of the cabinet grows and the cost of the cabling is to be taken into account, as well.

Distributed I/O gives the user the ability to keep the central panel to a manageable size and use either smaller IP20 remote I/O solutions or IP67 distributed I/O solutions.

3. Modularity: Distributed I/O systems can be as small or as big as needed. Expansions and higher densities are a given to reduce costs and space. If more power is required, simply add a power block. If the I/O needs to be changed, simply change out the electronics, and program edits are also easily done.

4. Troubleshooting and uptime: How fast do you need to be up and running again in case of a failure? With distributed I/O systems, the electronics are typically easily exchangeable without having to rewire the card itself. No special training or programming is needed.

That is normally not the case with a lot of local I/O installations. The full machine has to be taken off-line for longer periods of time as compared to a distributed I/O system. In a distributed I/O system, if there is a faulty input or output, simply swap the electronics out while the wiring harness stays in place and you’re up and running again. The Web server allows technicians to log in and remotely troubleshoot any issues before the decision is made to do a hardware swap. The same thing applies for the IP67 solution, and the advanced diagnostics in distributed I/O systems allow for more accurate troubleshooting: specific input or output short circuits or open loads instead of just a fault.

5. Inventory and customer design: With local I/O systems, if a customer has a specific protocol requirement, then changing the design, rewiring the I/O and relocating the sensors is an absolute nightmare for the machine builder.

Distributed I/O to the rescue. Because distributed I/O relies on a main controller in the local I/O panel, the field I/O has very limited smarts. This actually allows the machine builder to keep the I/O wiring and placement of the sensors the same. All that is required is to change out the protocol coupler or head unit of the distributed I/O system and the design is done. To the controller, the field or distributed I/O looks like it’s just an extension of its onboard I/O, and nothing is affected. This greatly reduces the cost of inventory for the machine builder because it can have a cookie-cutter design template and just change out the protocol head on an as-needed basis.

Building or expanding industrial control systems to provide remote-monitoring capabilities can be a challenge. By choosing the right equipment, you can save a lot of time and effort, now and in the future. The points noted above are some of the advantages. A reliable and fast distributed I/O system reduces wiring effort and saves time, money and work.

Andrew Barco, business development and marketing manager—North America, Weidmüller

The integration link

When you are looking at the I/O, it is very important to look at the whole picture: the total integration picture. What we mean is that, as you described, the process of laying out a machine requires design time, assembly time, debug time and controls development time, plus the actual cost of the components. Based on the description you provided, it appears that in most cases you opt for the central controls cabinet, bringing all the I/O to one single location with a rack-mounted structure. In the design phase, a typical challenge occurs when you need to draw out and define each and every wire and its terminations. Then comes the assembly phase, where you have to physically route, cut, strip, crimp, label and terminate each wire. With about 100 sensors, you are talking about a week of wiring time that is in addition to the panel build time. Another drawback with this approach is that you do not have any diagnostics information about short circuits and over-currents or miswirings. Debugging the system takes time and adds no value to your machine besides increasing the cost of assembly. All things considered, a network-based, distributed-I/O architecture appears very appealing.

While the network-based approach offers diagnostics and connectorized solutions that reduce your assembly time, the cost can go up quickly. A typical network I/O block offers only a few points of discrete or analog I/O. The few points could be eight or 16. In your case, when you are looking for a solution for hundreds of I/O points, every 17th I/O point requires a new network node. Another separate network node would be needed for the analog I/O. Every time you add a network node, you need a more expensive network cable and power cable. Even when the network I/O block appears cost-effective, you may end up with a more expensive solution that may not be scalable to your application need (Figure 1).

Figure 1: Every time you add a network node, you need a more expensive network cable and power cable. Even when the network I/O block appears cost-effective, you may end up with a more expensive solution that may not be scalable to your application need.

(Source: Balluff)

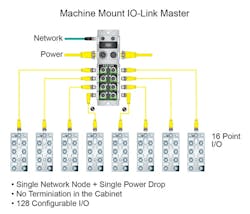

There is a newer technology, about 10 years old, that could prove beneficial to your controls architecture. The technology is called IO-Link (www.io-link.com). This is the first standardized (IEC 61131-9) I/O communication technology designed to unify sensor-actuator communication over a standard three- or four-wire sensor cable. On a single network drop you could connect up to 128 I/O points that could be inputs or outputs or any combination thereof (Figure 2). The network gateway is referred to as the IO-Link master, and the I/O hubs are the IO-Link devices that connect to the discrete sensors or actuators. Each I/O hub can be up to 20 m away from the master. Each open IO-Link port on the master can be connected to a multitude of devices. Some technology providers offer analog I/O hubs that can collect multiple analog sensors and bring them through a single port of the IO-Link master. Or you could simply choose a sensor that is IO-Link capable. Since the I/O hubs do not have a network node, and power can be transmitted through the same communication cable, the IO-Link-based architecture usually is a cost-effective solution. The savings for component costs are better when compared with a network I/O approach, and total integration time is a lot less compared to your existing approach. Additionally, all ports are short-circuit-protected; all diagnostics are conveyed through the IO-Link master to the controller, simplifying the total integration of the system.

Figure 2: On a single network drop you could connect up to 128 I/O points that could be inputs or outputs or any combination thereof.
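The node-count arithmetic behind this comparison can be sketched as follows. The 16-point block size and the 128 points per IO-Link drop come from the text; the function names, the single extra analog node and the 300-point machine are assumptions chosen only to make the example concrete.

```python
import math


def conventional_nodes(discrete_points, points_per_block=16, analog_nodes=1):
    """Network nodes needed with conventional network I/O blocks.

    A new node is needed for every full block of discrete points,
    plus separate node(s) for analog I/O (assumed to be one here).
    """
    return math.ceil(discrete_points / points_per_block) + analog_nodes


def io_link_drops(total_points, points_per_drop=128):
    """Network drops needed when up to 128 points share one drop."""
    return math.ceil(total_points / points_per_drop)


points = 300  # hypothetical machine
print(conventional_nodes(points), "conventional network nodes")
print(io_link_drops(points), "IO-Link network drops")
```

For the hypothetical 300-point machine this works out to 20 conventional nodes against 3 IO-Link drops, each conventional node bringing its own network and power cable.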

Now, the second part of your question: the speed of communication for the high-speed requirements of the machine. You are absolutely correct when you implied that network I/O adds additional time because of the network delay. Industrial network technologies have been prominently used on a variety of high-speed machines for more than 15 years. There are several industrial-network options, including Gigabit-Ethernet-based EtherCAT and CC-Link IE. With IO-Link architecture, the I/O cycle time could be about 5-10 ms in total from sensor to controller to actuator. In the case of analog sensors, it could be a little higher than that. The suggestion here is that if the I/O cycle-time requirements are less than 10 ms, it would be a good idea to separate the I/O that is essential for real-time processes and the I/O for non-real-time processes. The I/O required for real-time processes could be kept the same as today, but the rest of the I/O could be consolidated with IO-Link architecture.

Figure 3: Since the I/O hubs do not have a network node, and power can be transmitted through the same communication cable, the IO-Link-based architecture usually is a cost-effective solution.

(Source: Balluff)
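The suggested split between real-time and non-real-time I/O can be sketched as a simple partition on the required cycle time. The 10 ms threshold is the upper end of the IO-Link cycle time quoted above; the function name, signal names and their required cycle times are hypothetical.

```python
def split_by_cycle_time(required_cycle_ms, io_link_cycle_ms=10.0):
    """Partition signals by whether they need a faster cycle than IO-Link.

    required_cycle_ms maps a signal name to the cycle time it needs.
    Returns (keep_as_today, consolidate_on_io_link).
    """
    keep_as_today = [
        name for name, need in required_cycle_ms.items()
        if need < io_link_cycle_ms
    ]
    consolidate = [
        name for name, need in required_cycle_ms.items()
        if need >= io_link_cycle_ms
    ]
    return keep_as_today, consolidate


# Hypothetical signals and their required cycle times in ms.
signals = {
    "registration_sensor": 1.0,
    "encoder_marker": 2.0,
    "stack_light": 500.0,
    "tank_level": 200.0,
}
real_time, other = split_by_cycle_time(signals)
print("keep as today:", real_time)
print("consolidate on IO-Link:", other)
```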

Shishir Rege, network marketing manager, Balluff

Single cable

Even though the basic concept of I/O is simple—signals in and signals out to accomplish a task—there are myriad factors to consider during the process of component specification. Availability of space, layout of the machine, accessibility of the machine, equipment budget, length of cable runs, required signal types and accuracy requirements all factor into the decision-making process.

The most common practice is to install all of the I/O in a control cabinet and run cable to route the signal to the necessary location. These devices are generally DIN-rail-mountable modules that are IP20-rated and offer diverse connectivity and functionality options. Depending on the number of signals, this type of solution could require a larger panel, which increases equipment costs and demands more space. In addition, if the cable runs are too long, particularly when dealing with analog signals, the system loses precision. These types of applications are where remote and machine-mountable I/O could be beneficial, as the signal from the field device remains very close to the distributed I/O.

Choosing the correct communication protocol is also important in the design process. The ability to seamlessly access and diagnose each channel on every I/O module from your main controller via a single interface offers significant value. A capable protocol in this context is EtherCAT, a deterministic, high-speed Ethernet-based fieldbus that provides synchronization and diagnostics down to the individual I/O modules. The newest expansion of this technology is EtherCAT P, which combines power and communication into one standard Ethernet cable. EtherCAT P facilitates much greater system simplicity, as it only requires a single cable run to connect back to the controller.

Andy Garrido, I/O product specialist, Beckhoff Automation

Be flexible

When deciding on an I/O configuration, there are a few things to take into consideration.

Environment: Applications in a noisy area will require more robust I/O such as CANbus or other twisted-pair and/or shielded wiring. High humidity, heat, vibration or other environmental conditions would require protection of local connections or the implementation of the remote I/O.

Budget: If cost is a factor, using a distributed I/O with multiple controllers might be out of the question. At the same time, the cost of running long cable can add up quickly, as well.

Volume of I/O: Having a high volume of high-speed I/Os would not fare well over an Ethernet network, causing excessive network traffic, ultimately affecting performance of the system. Using local ports or distributing them amongst other controllers may be more efficient, depending on the application.

Type of I/O: High-speed inputs and outputs such as encoders and stepper motors with hardware-level processing must be connected locally or to a remote module somewhere in order for proper function.

Flexibility of infrastructure: If you anticipate potential system growth over time, then using RS-232 doesn’t bode well for scalability as it is a 1-to-1 connection. It might be better to set up an RS-485, CANbus or Ethernet network instead.

James Cappelletti, application engineer, Unitronics

Close to the process

It depends on the application. We believe that the highest system performance and reliability can be obtained in systems involving many I/O functions through distributed processing, the practice of placing intelligent control elements as close as possible to the process being controlled. With local controllers handling time-sensitive closed-loop control processes, the need for high speed in the fieldbuses that connect the distributed controllers to central computing resources such as PLCs is reduced, and the bandwidth required is limited if communications are driven by events such as sequence changes or motion profile/program changes, rather than individual motion operations. A fieldbus such as EtherNet/IP generally has more than enough performance to handle supervisory control, data acquisition and HMI data display functions when it is not burdened with the need to pass feedback and control data within the constraints of control loop timing. The advantages of using a fieldbus compared to discrete wiring are that wiring costs are lower, system reliability is increased and maintenance costs are decreased due to having fewer connections to go bad.

Incorporated in this response is the advice that it is generally not an optimal control solution to have a central PLC performing time-critical and precise control functions in a large machine, even with high-speed point-to-point I/O solutions. PLCs are valuable but designed more for general-purpose computing and supervisory control functions, rather than direct machine control. Dedicated distributed controllers are optimized for the tasks they perform, such as closed-loop motion control, and therefore offer better performance for those tasks, while promoting lower overall system cost. And, with distributed computing elements sharing the workload, a less-costly supervisory PLC can often be used.

Bill Savela, PE, marketing director, Delta Computer Systems

Don’t drop the voltage

Some of the factors that go through my mind when deciding whether or not to use distributed I/O are the following:

- proximity of the devices to the local rack

- quantity of items

- shipping breaks

- voltage drops

- speed of communications.

The largest determining factors in using distributed I/O are how far the devices are away from the local rack and whether there are enough points of I/O in the same vicinity to warrant:

- the added cost of a communication module

- the added cost of potentially a new enclosure

- the design time to develop a separate I/O control enclosure or distributed I/O scheme.

So what is the distance-to-quantity ratio? In general, once we get out about 50-75 ft from the local rack with about eight to 12 points, we’re considering a distributed I/O solution. At that point, the savings in wire and routing simplification begin to offset the additional hardware and design costs incurred.
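As a rough sketch, the distance-to-quantity rule of thumb can be expressed as a single check. The default thresholds use the low ends of the ranges quoted above (50 ft and eight points); the function name is hypothetical, and a real decision would also weigh enclosure, module and design costs.

```python
def consider_distributed_io(distance_ft, points,
                            min_distance_ft=50.0, min_points=8):
    """Return True when a group of points is far enough from the local
    rack, and large enough, to be worth evaluating for distributed I/O."""
    return distance_ft >= min_distance_ft and points >= min_points


print(consider_distributed_io(60.0, 10))  # far enough, enough points
print(consider_distributed_io(20.0, 10))  # close to the local rack
print(consider_distributed_io(60.0, 3))   # too few points to justify it
```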

If a shipping break is involved, unless there are very few I/O points beyond the break, a distributed I/O structure is a fantastic advantage over home runs back to the local rack. The reduced time to break down the machine, set up the machine on-site and debug on startup typically makes the additional cost of the distributed I/O seem like peanuts. The reduced documentation and reduced hardware cost for one less pull box per shipping break can also add to the appeal of distributed I/O.

Another reason for using a distributed I/O scheme would be to mitigate the risk of voltage drop. Our designers get nervous anytime you have low-voltage (24 Vdc) connections more than 200 ft away from the source. At that distance, we start watching our device loads like a hawk, knowing that we’re in the range where voltage drop can start to make things stop working. If we have only one or two sensors out there, we may just make sure we’re within tolerance, but, if we have enough out there to fill an I/O module or a brick of I/O, we’re going to recommend distributed I/O so that our reliability can increase.
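A quick voltage-drop estimate shows why runs of this length get attention. The sketch below uses Ohm's law over the out-and-back conductor length. The wire gauges, the approximate copper resistance values (ohms per 1,000 ft at room temperature) and the load currents are assumptions for illustration; the acceptable minimum voltage has to come from each device's datasheet.

```python
# Approximate resistance of copper wire in ohms per 1,000 ft (assumed values).
OHMS_PER_1000_FT = {16: 4.016, 18: 6.385}


def voltage_at_device(supply_v, load_a, one_way_ft, awg=18):
    """Estimate the voltage at the far end of a two-conductor DC run.

    The current flows out and back, so the loop length is twice
    the one-way distance.
    """
    loop_ohms = 2 * one_way_ft * OHMS_PER_1000_FT[awg] / 1000.0
    return supply_v - load_a * loop_ohms


# Hypothetical loads at 200 ft from a 24 Vdc supply.
for load_a in (0.1, 0.5, 2.0):
    volts = voltage_at_device(24.0, load_a, 200.0)
    print(f"{load_a} A load: {volts:.2f} V at the device")
```

Under these assumptions a single small sensor loses very little, while a group of devices drawing a couple of amps over the same pair loses several volts, which is the situation where moving the I/O closer to the devices helps.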

Regarding speed of communications, you just need to make sure that your I/O update time is shorter than the duration of your fastest signal. There are several factors that we watch when determining which signals to take to the distributed I/O.

- What type of network are we using?

- How many devices are we communicating to?

- What are the run lengths of the communication cables?

- What speeds can our network switches and other network infrastructure support?

As an example, newer Ethernet networks with five or six communication modules attached to a switch or two and with run lengths around 100 ft can usually handle 20-ms I/O update speeds. If you add more wire length and devices to the network, then 50 ms becomes the new level to start watching more closely. So, if you have some signals that need to be faster than this, those would need to go to the local rack.
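The example figures above can be turned into a rough screening check. The 20 ms and 50 ms values and the six-module, 100 ft boundary are taken from the text as rules of thumb, not guarantees for any particular network; the function names and the sample signals are hypothetical.

```python
def rule_of_thumb_update_ms(modules, run_length_ft):
    """I/O update time to plan around, per the rule of thumb above."""
    if modules <= 6 and run_length_ft <= 100:
        return 20.0
    return 50.0


def belongs_on_local_rack(signal_duration_ms, modules, run_length_ft):
    """A signal shorter than (or equal to) the planned update time
    could be missed on the network, so it goes to the local rack."""
    update_ms = rule_of_thumb_update_ms(modules, run_length_ft)
    return signal_duration_ms <= update_ms


# Hypothetical signals on a small network (5 modules, 100 ft runs).
print(belongs_on_local_rack(5.0, 5, 100))    # short pulse
print(belongs_on_local_rack(200.0, 5, 100))  # slow limit switch
# The same 30 ms signal on a larger network.
print(belongs_on_local_rack(30.0, 12, 300))
```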

Determining what model of distributed I/O to use just comes down to the types of signals we’re dealing with and what makes the most sense for the application. Around here, we see a lot of Allen-Bradley Flex I/O remote racks, and a fair amount of Allen-Bradley Point I/O mounted in remote enclosures. This is because the number and type of signals we’re dealing with are varied, and we like the flexibility of the platforms. I think that the ArmorBlock style of distributed I/O would work great in a conveyor-type application where you have fewer points per group and simple devices such as limit switches, proximity switches and solenoid valves—things that essentially take one cable to connect and don’t have complicated power and wiring needs. I say this because the block-style I/O doesn’t allow a lot of flexibility in separating power for the devices, so, if you need something more complicated than just power for outputs and power for inputs, an ArmorBlock setup may not be what works best for you.

Donavan Moore, lead electrical designer, Concept Systems, Portland, Oregon, and member of CSIA

What’s the scale?

Unfortunately, there are no concrete rules defining when to implement a distributed I/O control system; however, there are any number of reasons to opt for either a hardwired or distributed I/O architecture. Typical criteria include design, hardware and installation costs; system expandability; layout scope and/or limitations; diagnostic capability; maintenance considerations; and knowledge and acceptance.

A primary factor in the decision process is scale. Generally, as a system grows in physical scale and I/O points, the cost/benefit analysis tends to shift in the direction of distributed I/O. Likewise, the variety of signal types needed—particularly specialty signals such as certain analog inputs, high-speed counters, RFID, serial, IO-Link, safety—may itself dictate the need for the flexibility offered by the vast range of fieldbus devices currently available on the market. It is also possible that other application-specific challenges may be aided by the use of a single bus, as opposed to bundled individual conductors. These include complexity of cable runs (junctions/transitions), weight and/or size limitations and dynamic installation (C-track/pivot points).

There are a variety of fieldbus protocols available, each with its own defining benefits and/or drawbacks, which influence when a particular distributed I/O system is most suitable. Selecting a controller that supports multiple fieldbus options, or choosing I/O suppliers whose products are protocol-independent, may help to extract the most value from distributed I/O systems.

Each case is unique; fortunately, there are plenty of resources available to help users make an informed decision.

Dan Klein, product manager—fieldbus technology, Turck

Less noise, interference and signal loss

You are correct that there are many factors to consider when determining which type of I/O implementation to use in an application. Centralized/local I/O has advantages: the hardware is removed from the rigors of the testing environment, and it’s easier to maintain. But the long runs of sensor cable can add excessive cost and complexity to your I/O system, and, as systems grow more complex, these lengthy wiring schemes become more difficult and costly to implement. With a distributed system, you can place the I/O close to the system and run only a single communication cable from the I/O, which reduces wire cost. More importantly, it increases measurement accuracy because the shorter sensor cables are less prone to noise, interference and signal loss. A distributed approach by its nature requires localized signal conditioning and I/O, but other features, such as the ability to synchronize I/O across all the distributed nodes, ruggedness, communication bus and onboard processing/storage, can be selected and customized to meet your application needs.

Tommy Glicker, product manager, data acquisition & control, National Instruments

Active with logic

The more complex the system, the better the argument for distributed machine-mounted I/O. Just looking at the cost of installation, the advantages are huge. Connecting sensors and actuators to distributed active I/O blocks using prefabricated double-ended cordsets is four times faster than terminating discrete wires to terminal blocks inside an enclosure. But the benefits don’t stop there. Troubleshooting time is also reduced. The prefabricated, pre-tested cordsets virtually eliminate wiring errors and the time required to correct them. There are also benefits after the installation. Replacing a 2-m double-ended cordset from a sensor to an active I/O block can be accomplished in minutes. If the system is wired using local rack-based I/O, it can take hours to trace the cable back to the enclosure, find where it is connected inside the enclosure, and then remove and replace the cordset. This process also means the enclosure must be opened, which usually requires the complete shutdown of the machine and a call to the electrician to do the work. Hours could be spent just waiting for the electrician to arrive.

Although Ethernet-based active I/O systems do operate at high speeds, very large systems could suffer from a longer-than-required response time. This can be addressed by the recent introduction of active I/O modules that incorporate their very own logic controllers. These distributed control units (DCUs) reduce the response time to 10 ms or less and can function independently or in cooperation with the central PLC. Using DCUs offloads the main PLC so that the overall system can run faster.

DCUs can also be used to control small systems instead of a central PLC.

The use of distributed I/O also reduces the size required for each enclosure. Rows of terminal blocks can be eliminated, because the only connection required is an Ethernet connection to the PLC. Using distributed I/O also means the enclosure can be built in a panel shop rather than wired on the factory floor. This typically means fewer wiring errors and additional savings in installation time.

The transition to distributed I/O can be a major change in design philosophy, but the benefits are large and will pay dividends well into the future.

Tim Senkbeil, product line manager, Belden Industrial Connectivity

Price, engineering and late changes

Here are a few considerations.

Price: If you are just considering the cost of I/O itself, a centralized or multi-channel module approach may be your best bet. Added savings can be had with traditional remote solutions using protocols such as Profibus for remote I/O. However, as you indicated, when you consider the overall project with its multi-core cables, routing design, conduit, cable trays and wiring labor, an Ethernet/network-based solution will be lower-cost overall.
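The difference between I/O hardware price and overall project cost can be illustrated with a rough Python sketch. Every figure below is a hypothetical placeholder, not a quoted price, and the model deliberately leaves out routing design, conduit and cable tray, which would add further to the centralized total.

```python
def installed_cost(io_hardware, cable_ft, cable_cost_per_ft,
                   terminations, minutes_per_termination, labor_rate_per_hr):
    """Return hardware plus cable material plus termination labor."""
    cable = cable_ft * cable_cost_per_ft
    labor = terminations * (minutes_per_termination / 60.0) * labor_rate_per_hr
    return io_hardware + cable + labor


# Hypothetical 100-point machine; all numbers are assumptions for illustration.
CENTRALIZED = dict(io_hardware=4000, cable_ft=15000, cable_cost_per_ft=0.40,
                   terminations=400, minutes_per_termination=5,
                   labor_rate_per_hr=60)
DISTRIBUTED = dict(io_hardware=7000, cable_ft=1300, cable_cost_per_ft=1.50,
                   terminations=110, minutes_per_termination=2,
                   labor_rate_per_hr=60)

if __name__ == "__main__":
    for label, inputs in (("centralized", CENTRALIZED), ("distributed", DISTRIBUTED)):
        print(f"{label}: hardware {inputs['io_hardware']}, "
              f"installed {installed_cost(**inputs):.0f}")
```

With these placeholder inputs, the centralized I/O hardware is cheaper, but its installed total comes out higher once cable and termination labor are counted. Substituting your own quantities and rates is the point of the exercise; the crossover will differ from project to project.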

Engineering efficiency: In some Ethernet I/O solutions, the actual installed I/O can be automatically scanned and configured using data imported from the I/O list, such as ranges. Test applications can also be generated automatically so that parallel work, such as loop checks, can proceed. Later, this configuration can be digitally marshalled automatically with the actual control strategy configuration.

Late changes: Single-channel I/O solutions on Ethernet with digital marshalling capabilities can handle late changes in projects, such as re-partitioning what runs in which controllers or changing I/O types, by reducing the amount of cabinet rework/rewiring.

Roy Tanner, product marketing manager, control technologies, ABB

Mike Bacidore is the editor in chief for Control Design magazine. He is an award-winning columnist, earning a Gold Regional Award and a Silver National Award from the American Society of Business Publication Editors.
