Will deep learning make other machine-vision technologies obsolete?

Heiko Eisele, president of MVTec, which provides machine-vision software and services, presented at the Advantech IIoT Virtual Summit in September. Eisele’s presentation was part of a general session on U.S. industrial equipment manufacturing, with additional presentations on using edge intelligence, navigating cybersecurity concerns and the newest trends in machine vision technology.

For his presentation, “The Eye of Industry 4.0 with Vision Intelligence and Machine Learning,” Eisele asked: “Why all the hype about deep learning? People almost expect some sort of magic from the technology.” His presentation explored how deep learning can advance industrial automation, as well as the technology’s limitations.

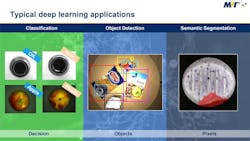

Classification, object detection and semantic segmentation

Typical deep learning applications can be divided into three main categories: classification, object detection and semantic segmentation. Eisele said deep learning can use image classification, for example, to determine whether a part is good or bad from the image alone. Another application is object detection, which means not only classifying images, but also identifying objects within the image and determining their positions. Lastly, deep learning can be used for image segmentation, such as the rejection of pills in a production line, based on the size of a surface defect.
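The pill example can be sketched in a few lines. This is only an illustration, assuming a segmentation network has already produced a binary mask in which 1 marks a defect pixel; the function names and the pixel threshold are hypothetical and were not part of Eisele's presentation.

```python
def defect_area(mask):
    """Count the pixels labeled as defect (value 1) in a binary mask."""
    return sum(1 for row in mask for value in row if value == 1)


def should_reject(mask, max_defect_pixels):
    """Reject the part when the defect covers more pixels than allowed."""
    return defect_area(mask) > max_defect_pixels


# Hypothetical 4x5 mask, as a segmentation step might output for one pill.
mask = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]

print(defect_area(mask))       # 5
print(should_reject(mask, 4))  # True
```

The network only supplies the per-pixel labels; the accept-or-reject rule on top of them is ordinary, adjustable logic.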

“How does all that magic work?” Eisele asked. The most basic concept of deep learning is training, he said. Users provide labeled data; in the case of image classification, many images must be labeled and identified, so the system can learn to recognize defects and tell a good part from a bad one.

“Likewise, if you’re looking to use deep learning for object detection or semantic segmentation, you also have to provide labeled images, basically giving the information that you eventually expect the deep learning network to determine on its own,” Eisele said. Typically, training the deep learning network requires anywhere from a minimum of 20 to 30 images up to several hundred, depending on the application, Eisele said. For training, the input data (the image and its associated label) is matched to the desired output.

“The network is optimized to make a statistical model that is supposed then to make decisions just based on plain images autonomously,” Eisele said. “Once the training has completed, the expectation is that all you have to do is feed in an image into the deep learning network, and then the deep learning network should make the decision for you.”

A good way to determine whether deep learning is the right approach, said Eisele, is to ask yourself: As a human, would you do a good job of solving the task?

As an example, Eisele considered a diamond for identification and classification. “If a human was asked to determine the angle of the edges between the tip, a human could estimate between 90° and 105°. However, if asked to determine the accuracy of the angle within a half a degree, that would be a more difficult task for a human alone,” Eisele said. “If high accuracy is required, most likely deep learning would not be the right approach, and you would be better off using classical machine vision like edge detection to determine the angle based on simple geometric relationships.”
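The classical route Eisele describes can be illustrated with a short sketch. It assumes an edge detector has already delivered point coordinates along each of the two edges; the function names and sample points are hypothetical. Each edge is fitted with a line through its points (principal-axis fit), and the angle between the two fitted lines is then a simple geometric calculation.

```python
import math


def edge_orientation(points):
    """Orientation (radians) of the best-fit line through (x, y) edge points."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    syy = sum((y - mean_y) ** 2 for _, y in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    # Principal axis of the point scatter; also handles vertical edges.
    return 0.5 * math.atan2(2 * sxy, sxx - syy)


def angle_between_edges(edge_a, edge_b):
    """Angle in degrees between the two fitted lines, in the range [0, 180)."""
    diff = abs(edge_orientation(edge_a) - edge_orientation(edge_b))
    return math.degrees(diff) % 180.0


# Hypothetical edge points: one edge rising at 45 degrees, one falling at 45.
edge_a = [(0, 0), (1, 1), (2, 2)]
edge_b = [(0, 0), (1, -1), (2, -2)]

print(round(angle_between_edges(edge_a, edge_b), 3))  # 90.0
```

Two intersecting lines form both an angle and its supplement, so a real application would still have to decide which of the two corresponds to the tip. The accuracy of the result depends on how precisely the edge points were located, not on a trained model.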

Traditional pattern matching vs. deep learning object detection

Another common machine vision technique is pattern matching, which locates objects in the image and associates that location, for example, to robot coordinates, so the object can be grasped by a robot. To show position recognition with 2D template matching, Eisele presented six example images with repetitive content, the kind of scene for which pattern matching is a good approach.

“Here’s how it works: You take an image of the pattern you’d like to find, and basically the algorithm behind the scenes learns its geometric features, for example, and then that part can be found on a pile of parts, and you get the position and orientation,” Eisele said. This “simple and straightforward” technique is used in at least half of machine vision applications, if not more, he said.
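The idea of searching an image for a taught pattern can be shown with a deliberately simplified sketch. It is not the geometric-feature method Eisele describes: it compares raw pixel values using the sum of absolute differences, handles position only (no rotation), and uses hypothetical names and toy data.

```python
def match_template(image, template):
    """Slide the template over the image; return the best (row, col) and its score.

    The score is the sum of absolute pixel differences, so lower is better
    and 0 means an exact match.
    """
    image_h, image_w = len(image), len(image[0])
    tmpl_h, tmpl_w = len(template), len(template[0])
    best_pos, best_score = None, None
    for r in range(image_h - tmpl_h + 1):
        for c in range(image_w - tmpl_w + 1):
            score = sum(
                abs(image[r + i][c + j] - template[i][j])
                for i in range(tmpl_h)
                for j in range(tmpl_w)
            )
            if best_score is None or score < best_score:
                best_pos, best_score = (r, c), score
    return best_pos, best_score


# Toy grayscale image containing the 2x2 pattern at row 1, column 2.
image = [
    [0, 0, 0, 0, 0],
    [0, 0, 9, 8, 0],
    [0, 0, 7, 9, 0],
    [0, 0, 0, 0, 0],
]
template = [[9, 8], [7, 9]]

position, score = match_template(image, template)
print(position, score)  # (1, 2) 0
```

Searching for a second kind of part would mean running the same loop again with another template, which is why training and run time grow with the number of objects in the classical approach.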

The deep learning solution is also easy to use, Eisele said. It can be trained or adjusted by engineers in production without machine vision knowledge simply by taking a picture of a part and hitting a training button. “Everything else happens behind the scenes,” Eisele said.

“When would a classical approach be better, and when would deep learning be better?” Eisele asked. “There is an important difference between classical pattern matching and deep learning-based object detection, and that is, in classical pattern matching, you train one template and then you search for that same template in the image. You can, of course, also train multiple templates and search for multiple objects at the same time, but in essence the training and the run time scales with the number of objects you’re trying to locate. So, in other words, traditional pattern matching is not a classification tool. And here’s where the power of deep learning comes in. Deep learning can not only locate objects, it can also identify and classify them at the same time. So, no matter how many different types of objects you’re trying to locate, as long as you have trained them all, you can do that in one approach basically without any influence on the run time,” Eisele said.

Other scenarios where traditional pattern matching would not fare well would be in identifying multiple instances of an object from many angles; objects that are overlapping beyond 50%; or different multiple objects at the same time.

What applications might be better suited for traditional methods? Deep learning requires many images of an object for proper training. If you want to retrain quickly on the fly during production, deep learning would not be a very convenient approach, Eisele said. Another downside of deep learning is its accuracy. “Generally, if you’re using traditional pattern matching, you get better accuracy, in regard to the position and orientation of the part, than you would achieve with deep learning,” Eisele explained.

Typical deep learning applications often involve defect classification. “For example, you’re looking at inspecting glass for quality and, in particular, you would like to classify the defects within that glass to help derive some knowledge about what went wrong in the production process,” Eisele said.

For starting a new production run on a part that has never been produced before, it would be difficult to gather all the needed data for training a deep learning network. Without defect examples, the deep learning system cannot be trained. “A common complaint I hear from customers, and rightfully so, is that deep learning is a black box. Once a deep learning network is trained, there’s little you can adjust. The only way you can change its behavior is to retrain it, using a different image set,” Eisele said.

Code reading is used across almost every industry, for tracking parts through production to delivery, and the increase in ecommerce has placed additional challenges on logistics. Labels that are wrinkled, torn or hidden behind plastic wrap can be quite challenging for code reading, Eisele said. “This is an area where deep learning and traditional methods meet,” he said. “Deep learning would be able to locate the codes in the images, but in an application like barcode reading, the system still needs to determine the width of the bars and the gap between the bars, and for that step classical machine-vision tools are still used today,” Eisele said.
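The classical measuring step can be sketched as follows. The example assumes the code has already been located and a single row of grayscale pixel values (a scanline) has been taken across it; the function name, the fixed threshold and the sample values are hypothetical. It converts the scanline into dark and light runs and reports the width of each bar and gap in pixels.

```python
from itertools import groupby


def run_lengths(scanline, threshold=128):
    """Return (is_bar, width_in_pixels) for each run along the scanline.

    Pixels darker than the threshold count as bar (1), the rest as gap (0).
    """
    bits = [1 if value < threshold else 0 for value in scanline]
    return [(bit, len(list(group))) for bit, group in groupby(bits)]


# Hypothetical scanline: 255 is white background, 0 is a black bar.
scanline = [255, 255, 0, 0, 0, 255, 0, 255, 255, 0, 0]

print(run_lengths(scanline))
# [(0, 2), (1, 3), (0, 1), (1, 1), (0, 2), (1, 2)]
```

A real decoder would go on to map these widths to the symbology's bar patterns, typically with sub-pixel edge positions rather than a fixed threshold.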

Is deep learning the holy grail of machine vision? “Deep learning certainly is a very powerful technology, but it certainly also has its downsides,” Eisele said.

“Deep learning is another tool in your machine vision toolbox, and it really comes down to selecting the right tool for the task. Don’t get hung up on the technology, and really try to determine what is the best suited for the particular application you’re trying to solve,” Eisele said.