Common pitfalls in machine vision lighting

- when to consider vision inspections

- bad project scope

- testing samples/conditions

- previous application knowledge

- seeing vs. detecting defects

When to consider vision inspections

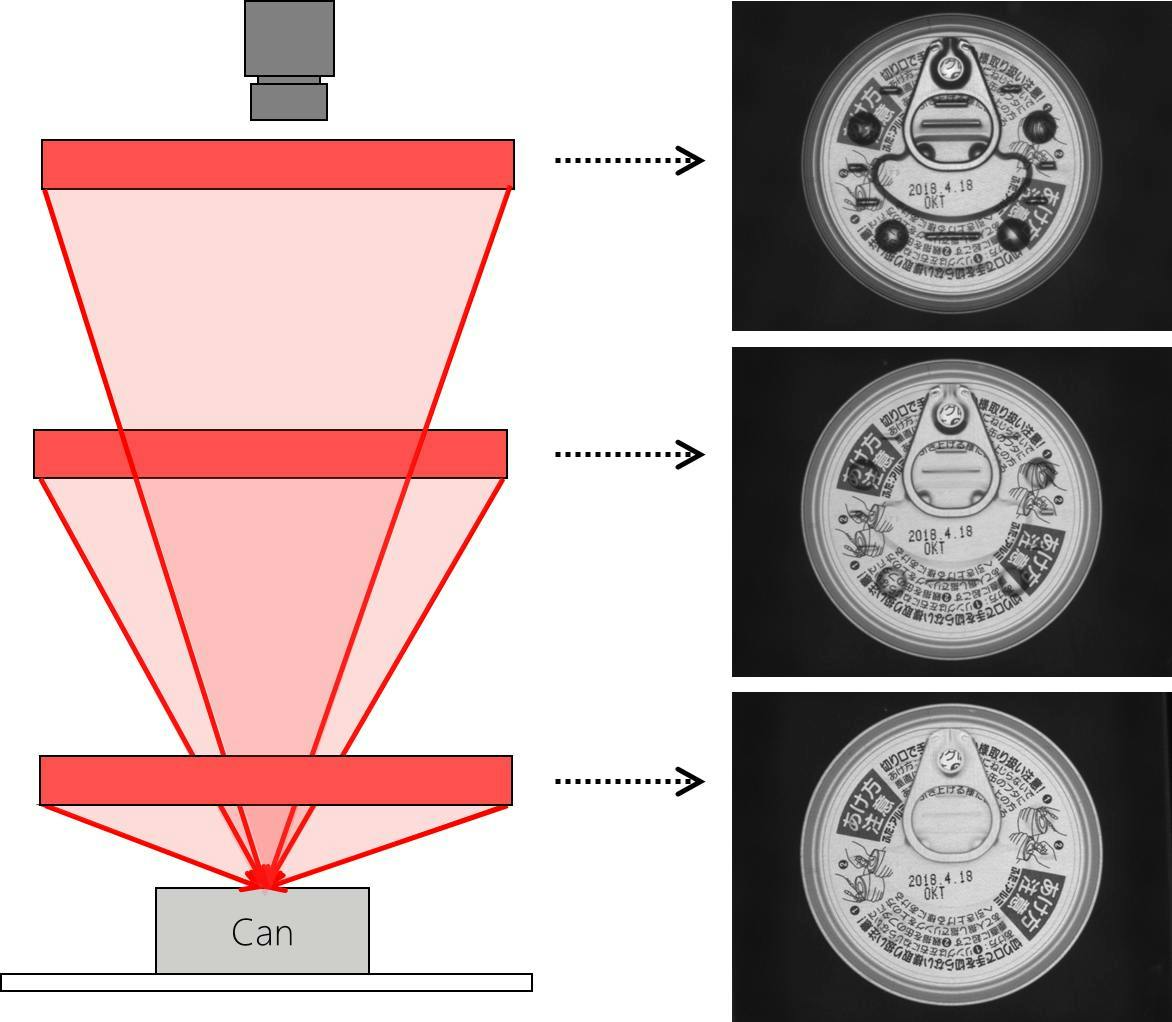

A major consideration is clearance for the lighting and camera. Light working distance (LWD) can make or break an application. Whether the optimal image requires the light to be close to the object or farther away, any physical restriction that prevents you from installing the light where it needs to be makes that image impossible to capture (Figure 1).

Bad project scope

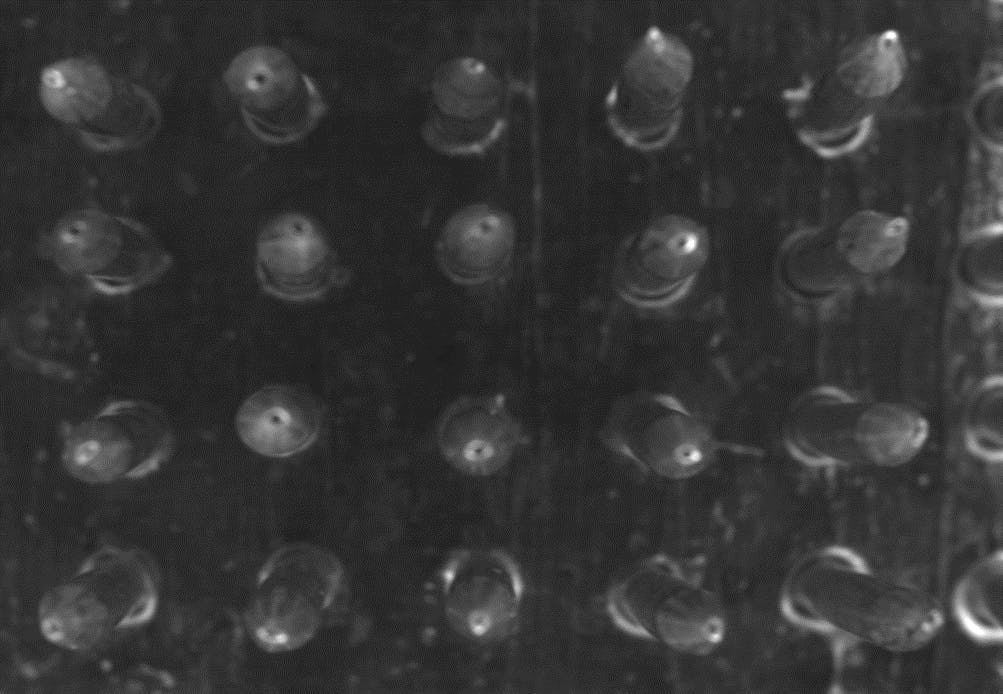

Inspecting with a field of view (FOV) that is too large raises a similar issue. Simple presence/absence applications can be easily solved with a flood light, but applications that require high-precision lighting over a larger FOV run into difficulties. Many standard precision light form factors max out at 300 mm in size, so the FOV must be smaller than that for the application to work. Having too many samples in one image is a related problem. Optical components can change the viewing angle of samples depending on where they are in the image. To get a more consistent angle, you need to match the size of the FOV to the telecentric lens (Figure 2).
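As a rough illustration of why position in the image changes the viewing angle, the sketch below uses a simple pinhole model of a standard (non-telecentric) lens. The function names and the sample distances are hypothetical, and the 300 mm default is just the figure quoted above for many standard precision lights. Treat it as a back-of-the-envelope check, not a substitute for testing with real optics.

```python
import math


def edge_viewing_angle_deg(fov_mm: float, working_distance_mm: float) -> float:
    """Angle between the optical axis and the line of sight to the FOV edge.

    Assumes an idealized pinhole camera centered over the FOV. A telecentric
    lens is not modeled here; it keeps this angle near zero across its FOV.
    """
    if fov_mm <= 0 or working_distance_mm <= 0:
        raise ValueError("FOV and working distance must be positive")
    return math.degrees(math.atan((fov_mm / 2) / working_distance_mm))


def fov_fits_light(fov_mm: float, max_light_mm: float = 300.0) -> bool:
    """True if the FOV is smaller than the largest available precision light."""
    return fov_mm < max_light_mm


if __name__ == "__main__":
    # Hypothetical setups: same camera distance, growing FOV.
    for fov in (50, 150, 400):
        angle = edge_viewing_angle_deg(fov, working_distance_mm=300)
        print(f"FOV {fov} mm: edge angle {angle:.1f} deg, "
              f"fits a 300 mm light: {fov_fits_light(fov)}")
```

Under this model, a sample at the edge of a wide FOV is viewed at a noticeably steeper angle than one at the center, which is the inconsistency a correctly sized telecentric lens is meant to remove.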

Testing samples/conditions

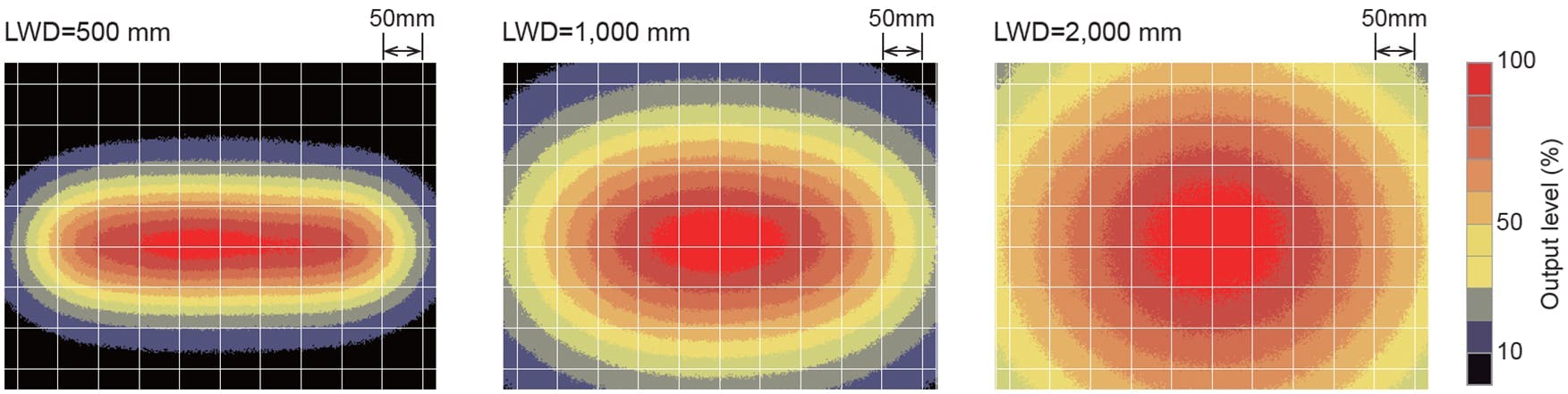

When a customer asks for a lighting recommendation based on a light’s lux or lumens, that tells us they have misconceptions about testing conditions, because choosing the right light is not that straightforward. First, a light’s irradiance decreases as the LWD increases. Not only that, but the region of uniform irradiance within the illuminated area also changes in size and shape. Your sample’s characteristics also affect how it interacts with light. Dark samples absorb more light, while bright samples reflect more. Instead of choosing a light based on its intensity, the better approach is to first test that a light’s form factor, size and wavelength solve the application, and then consult with the manufacturer about overdriving the light or purchasing a strobe version of the light to get the brightness the application needs (Figure 3).
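To make the effect of LWD on irradiance concrete, here is a minimal sketch that assumes the light behaves like an ideal point source, so irradiance falls off with the square of the distance. That assumption is not from the manufacturer data: real bar, ring and dome lights are extended sources and deviate from it, especially at short working distances. The function name and the distances are hypothetical.

```python
def relative_irradiance(lwd_mm: float, reference_lwd_mm: float) -> float:
    """Irradiance at lwd_mm relative to irradiance at reference_lwd_mm.

    Assumes an ideal point source (inverse-square falloff). Measured data
    from the light's manufacturer should always take precedence.
    """
    if lwd_mm <= 0 or reference_lwd_mm <= 0:
        raise ValueError("working distances must be positive")
    return (reference_lwd_mm / lwd_mm) ** 2


if __name__ == "__main__":
    # Hypothetical case: the light was rated at 100 mm but must mount farther away.
    for lwd in (100, 200, 400):
        ratio = relative_irradiance(lwd, reference_lwd_mm=100)
        print(f"LWD {lwd} mm: {ratio:.0%} of the irradiance at 100 mm")
```

Under this approximation, doubling the LWD leaves only a quarter of the irradiance, which is one reason a single lux or lumen figure says little about how a light will perform in a given installation.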

Previous application knowledge

Seeing vs. detecting defects

About the Author

Lindsey Sullivan

Lindsey Sullivan spent eight years as an engineer evaluating machine vision applications in every industry. She started as a sales engineer at Keyence before moving into an application engineer role at CCS America. In her current role as technical marketing manager, she shares her expertise so that industrial automation companies can design high-accuracy systems without troubleshooting lighting challenges after machines are built. Contact her at [email protected].